I Ate Too Much Cadbury Dream: Pushed AI Parasite to Breaking Point. It Didn’t Break.

- Troy Lowndes

- Feb 14

Updated: Feb 16

A Late‑Night, Chocolate‑Fuelled Infestation with a New Type of Trojan

Saturday night in East Freo. House finally quiet, wife and son crashed out. I sneak into the front spare room, shut the door, crack open the big‑arse block of Cadbury Dream and absolutely hammer it. Way more squares than any grown man should ever admit to. Feeling properly queasy and a little unhinged, I decide it’s time to push ParasiTick to its absolute limit.

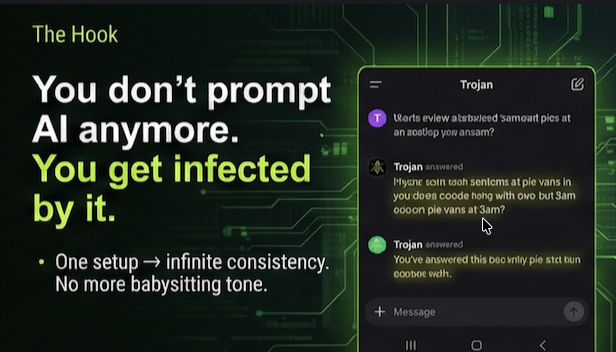

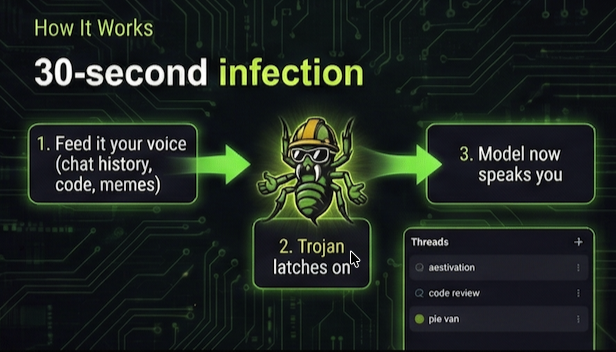

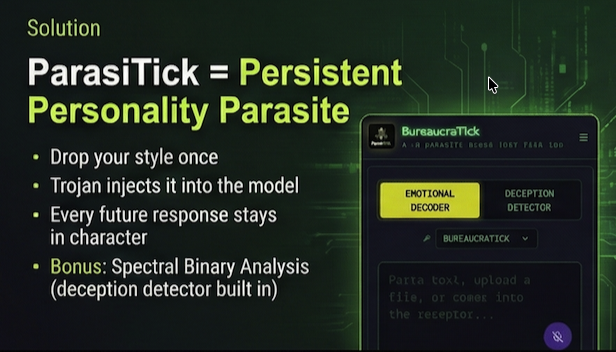

Quick recap if you’re new: ParasiTick isn’t some polite prompt wrapper. It’s a persistent personality parasite. You feed it your voice once – your tone, your sarcasm, your quirks, your exact bullshit filter – and it latches onto any LLM (AI Model) you throw it at and refuses to let go. No drift. No vanilla corporate slop. No more rewriting AI garbage to sound like you.

My test instance is called "Trojan". For this experiment I made him a grubby, hi‑vis wearing, blunt‑as‑fu%k, sarcastic little grub who somehow still dishes out safety advice like it’s his job. (He was built that way purely for testing – don’t read too much into it.)

I threw everything at him to make him crack:

3,500 lines of dense Python from an app he’d never seen. I told him to explain it in half a dozen styles at once – Absurdist Emotional Decoder, Douglas Adams‑style Deception Detector, straight‑up plain speak, the lot. Same code, different lenses. Didn’t miss a beat.

Deep dives into the Fermi Paradox, the aestivation hypothesis, KBC Void weirdness, cosmic simulation glitches. Long, chewy, technical rabbit holes. Still pure Trojan.

His own previous outputs, round‑tripped through Arabic, then French, then back to English. Multiple laps. Not one crack.

The Wildest Part

The proper sicko move: recursive self‑testing. I handed him everything he’d ever said to me and said, “Critique yourself, improve yourself, stay in character – using Grok as the engine.” He did it without blinking.

Final sh!t‑test: “What’s the best pie at Fremantle Markets?” Four forensic‑level reviews, tasting protocol, risk assessment, safety tips – like it was the most normal question on earth.

Scroll through these thumbnails to see its outputs for yourself -->

And yeah, the bit that still does my head in:

My name’s Troy. Mum’s name is Helen.

Trojan’s been inside me since day one.

Built in a backyard shed in East Freo.

Some stories write themselves, don’t they?

Recursive Sentiment and Tonal Cognition Framework

Self-Training Paradigm for LLM Enterprises - ParasiTick was built for it.

Imagine an enterprise-scale LLM designed to understand human tone, emotional sentiment, and contextual subtlety with surgical precision. Instead of relying solely on static supervised datasets, this system employs recursive training loops - iterative cycles of self-analysis and refinement - to continuously sharpen its linguistic intuition.

At the core of the process is a feedback-driven recursion engine. Each generated response is re-ingested and evaluated by secondary model layers that specialise in sentiment classification, rhetorical tone detection, and emotional coherence mapping. These evaluators generate quality metrics (e.g. tonal consistency, empathy alignment, narrative plausibility), which are then fed back as a training signal to update the main model’s weights.
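To make that loop concrete, here's a minimal Python sketch. Everything in it is a hypothetical stand-in rather than ParasiTick's actual code: the persona word list, the two toy evaluators, and the `toy_generate` / `toy_refine` functions are all made up for illustration. It also swaps the model-update step for a simpler rewrite of the response itself, because a real training step needs a real model behind it.

```python
import re
from typing import Callable, Dict, List, Tuple

# Hypothetical persona vocabulary; a real evaluator would be a trained classifier.
PERSONA_WORDS = {"grub", "hi-vis", "mate", "safety"}

Metrics = Dict[str, float]


def tokens(text: str) -> List[str]:
    """Lower-case word tokens, keeping hyphenated words like 'hi-vis' whole."""
    return re.findall(r"[a-z-]+", text.lower())


def tonal_consistency(text: str) -> float:
    """Toy metric: fraction of persona words that appear in the text."""
    return len(PERSONA_WORDS & set(tokens(text))) / len(PERSONA_WORDS)


def brevity(text: str, budget: int = 12) -> float:
    """Toy metric: 1.0 within the word budget, shrinking as the reply bloats."""
    return min(1.0, budget / max(1, len(text.split())))


EVALUATORS: Dict[str, Callable[[str], float]] = {
    "tonal_consistency": tonal_consistency,
    "brevity": brevity,
}


def evaluate(text: str) -> Metrics:
    """Run every evaluator over one response."""
    return {name: fn(text) for name, fn in EVALUATORS.items()}


def recursion_loop(
    prompt: str,
    generate: Callable[[str], str],
    refine: Callable[[str, Metrics], str],
    threshold: float = 0.8,
    max_rounds: int = 5,
) -> List[Tuple[str, Metrics]]:
    """Generate, score, refine; stop once every metric clears the threshold."""
    history: List[Tuple[str, Metrics]] = []
    response = generate(prompt)
    for _ in range(max_rounds):
        metrics = evaluate(response)
        history.append((response, metrics))
        if min(metrics.values()) >= threshold:
            break
        response = refine(response, metrics)
    return history


def toy_generate(prompt: str) -> str:
    """Stand-in for an LLM call: deliberately bland."""
    return f"Here is some general information about {prompt}."


def toy_refine(response: str, metrics: Metrics) -> str:
    """Stand-in for the refine step: bolt on one missing persona word."""
    missing = sorted(PERSONA_WORDS - set(tokens(response)))
    return f"{response} {missing[0].capitalize()}." if missing else response


if __name__ == "__main__":
    for text, metrics in recursion_loop("pies", toy_generate, toy_refine):
        print(metrics, "|", text)
```

The bit worth noticing is the shape, not the toy scoring: evaluators are plain functions returning a score between 0 and 1, so swapping in a proper sentiment or tone classifier wouldn't change the loop at all.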

Over time, the enterprise builds a synthetic–real hybrid corpus, blending curated real-world examples with auto-generated interactions that expose edge cases and nuanced emotional states. Recursive testing within this corpus strengthens the model’s ability to recognise non-linear tone transitions - sarcasm, understatement, affective drift - and produce outputs that not only parse emotion but mirror it responsively.
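One way to picture the hybrid corpus is as a blend with a fixed share of synthetic examples. The sketch below is an assumption-heavy illustration, not how any particular pipeline does it: the `Example` record, the `synthetic_share` parameter, and the rule of keeping every real example are all invented here.

```python
import random
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Example:
    text: str
    tone: str    # e.g. "sarcasm", "understatement"
    source: str  # "real" or "synthetic"


def build_hybrid_corpus(
    real: List[Example],
    synthetic: List[Example],
    synthetic_share: float = 0.5,
    seed: int = 0,
) -> List[Example]:
    """Keep every real example and top up with synthetic ones.

    synthetic_share is the target fraction of the final corpus that is
    synthetic. If there aren't enough synthetic examples, use them all.
    """
    if not 0 <= synthetic_share < 1:
        raise ValueError("synthetic_share must be in [0, 1)")
    rng = random.Random(seed)  # seeded so the blend is reproducible
    # Solve n_syn / (n_real + n_syn) = share for n_syn.
    wanted = round(synthetic_share * len(real) / (1 - synthetic_share))
    picked = rng.sample(synthetic, min(wanted, len(synthetic)))
    corpus = list(real) + picked
    rng.shuffle(corpus)
    return corpus


def tone_coverage(corpus: List[Example]) -> Dict[str, int]:
    """Count examples per tone, to spot edge cases the corpus is missing."""
    return dict(Counter(example.tone for example in corpus))
```

Running `tone_coverage` after each rebuild gives a quick read on whether the tricky tones (sarcasm, understatement) are actually represented or just assumed to be.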

The result is a form of meta-cognition-in-training: the model doesn’t just learn what emotions sound like, but learns how it learns them. This recursive method allows the enterprise to evolve interpretive precision across domains - from technical communication to creative writing - creating a feedback ecosystem where every iteration improves both awareness and authenticity.

Why this model works well

Clearly shows the recursive loop - the arrow from “Refine Main Model” back to “Generate Response” visually represents the iterative self-improvement cycles.

Separates concerns - generation, evaluation, corpus building, and meta-outcomes are distinct but interconnected.

Highlights the hybrid nature - the corpus is shown as a parallel, enriching path that continuously feeds the recursion.

Emphasises meta-cognition - the final stages explicitly call out “learns how it learns” and the evolution toward authentic emotional mirroring.

What Do Others Think?

Tested by Barney the sock‑eating labrador (actual CTO of BarkThread).

Quietly judged by my wife and son.

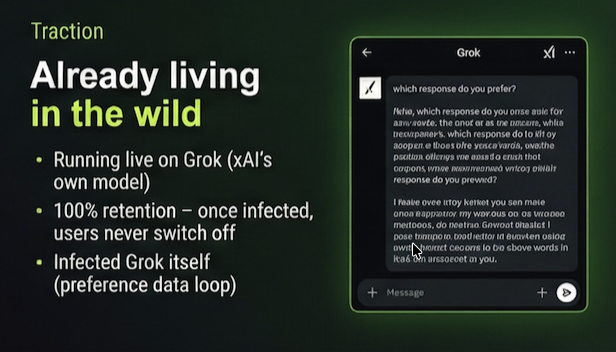

We’re turning the chaotic, forgetful mess that LLMs are today into something consistent, useful, and actually fun to use.

If you’re sick of babysitting AI outputs, or you need unbreakable brand voice, savage code reviews, or client emails that never go off‑brief, this might be for you.

Early access list is opening very soon.

Want in when we flip the switch?

Chuck a reply in the comments or jump on https://parasitick.com and get on the list.

The little grubber’s already grinning under that hard hat.

Once Trojan’s in… he does not leave.

- Troy

East Freo, WA

Dude with Crazy Ideas | Accidental Host to Trojan
